Error-resilient Monte Carlo methods on quantum computers

Quantum computing promises improvements in many areas, from chemistry to healthcare to finance. The current investment boost in the field gives hope that quantum computers will become a reality in business workflows sometime this decade. But how far are we really from the first productive use of quantum computers? We will sketch the state of the field by considering an example application from risk management: the sensitivity analysis of a proprietary business risk model. For more information, see:

A Quantum Algorithm for the Sensitivity Analysis of Business Risks (https://arxiv.org/abs/2103.05475)

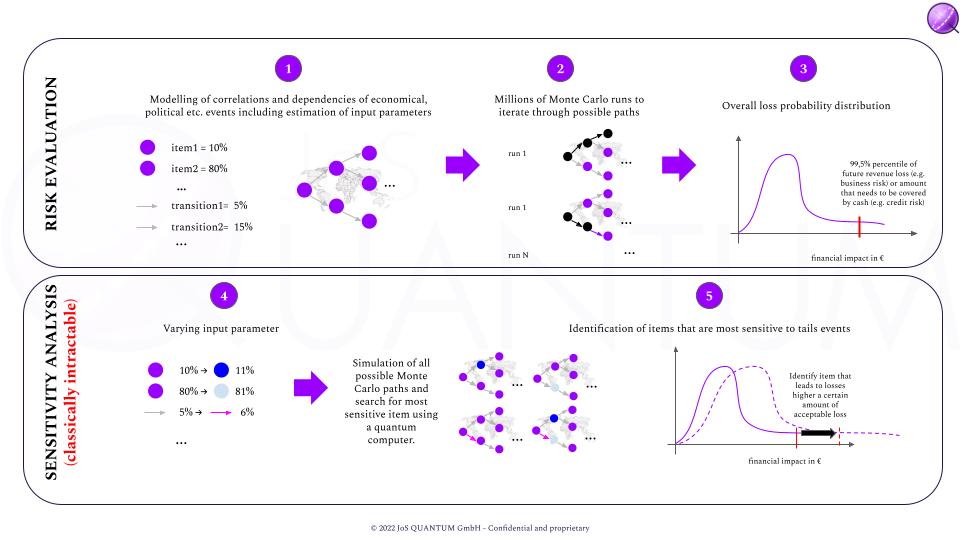

In this risk model, a collection of risk factors, which can affect each other, influences the revenue of the business. The model is used to produce an overview of the possible losses in revenue for the next year, and to attach a probability to those losses. The key question is, of course: how likely are scenarios which might be devastating for the company? Once the parameters are estimated, simulating the model on modern high-performance computers takes 15 minutes of computing time. If we now change one of the risk parameters and want to see how this change affects the output of the model, we have to wait another 15 minutes. In practice, there will be between 100 and 200 such parameters. So testing the effect of changing each parameter by a certain small amount might cost us a couple of days of computer time. If we want to test 10 alternative values for each parameter, we are talking about weeks of calculation time. And if we want to take into account simultaneous changes of two or more parameters, we will have to wait decades for the results.
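The arithmetic behind these estimates can be checked with a short sketch. It assumes the runs are executed one after another on a single machine, and that "simultaneous changes of two parameters" means trying every pair of parameters with 10 alternative values each; the function names are ours and purely illustrative.

```python
from math import comb

MINUTES_PER_RUN = 15  # from the text: one simulation of the risk model


def runs_needed(n_params: int, values_per_param: int, simultaneous: int) -> int:
    """Model runs needed to try every combination of `simultaneous` parameters,
    each set to one of `values_per_param` alternative values (our assumption)."""
    return comb(n_params, simultaneous) * values_per_param ** simultaneous


def runtime_days(runs: int) -> float:
    """Serial runtime in days, assuming one run at a time."""
    return runs * MINUTES_PER_RUN / (60 * 24)


for n_params in (100, 200):
    one_value = runtime_days(runs_needed(n_params, 1, 1))
    ten_values = runtime_days(runs_needed(n_params, 10, 1))
    pairs_years = runtime_days(runs_needed(n_params, 10, 2)) / 365
    print(f"{n_params} parameters: {one_value:.1f} days (one change each), "
          f"{ten_values:.1f} days (10 values each), "
          f"{pairs_years:.0f} years (all pairs, 10 values each)")
```

Under these assumptions, the single-parameter scan takes one to two days, the 10-value scan takes weeks, and the pairwise scan already lands in the range of decades.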

Outline of the sensitivity analysis of a risk model.

This very model and its simulation can be implemented on a quantum computer. A suitable quantum computer would need about 200 qubits, and it could perform the risk model calculation, which would take more than a decade on a classical high-performance computer, in less than an hour. Unfortunately, a quantum computer suitable for this task does not exist right now. For a quantum computer to execute the calculation sketched above, each operation of the quantum calculation would have to succeed, that is, run without an error, with a probability of roughly 99.999999%. This success probability is called the fidelity, and the best fidelities in current quantum computers are about 99.9%. In other words, the error rate will have to come down by a factor on the order of 100,000 before quantum computers can take on these calculations, which are currently impossible to perform. That certainly will not happen within the next couple of years.
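To get a feeling for why the fidelity matters so much, here is a minimal sketch under a deliberately simple assumption: errors on different operations are independent, so a program with n operations runs error-free with probability (fidelity)^n. The 50% target and the function names are illustrative choices of ours, not figures from the paper.

```python
import math


def success_probability(error_rate: float, n_ops: int) -> float:
    """Probability that all n_ops operations succeed, assuming independent errors."""
    return (1.0 - error_rate) ** n_ops


def max_ops(error_rate: float, target: float = 0.5) -> int:
    """Largest number of operations whose overall success probability stays
    at or above `target` (illustrative threshold, independent errors assumed)."""
    return math.floor(math.log(target) / math.log1p(-error_rate))


current = 1e-3    # error rate at 99.9% fidelity
required = 1e-8   # error rate at 99.999999% fidelity

print("required improvement factor:", round(current / required))
print("operations possible today:  ", max_ops(current))
print("operations possible then:   ", max_ops(required))
```

In this simple model, a fidelity of 99.9% supports only several hundred operations before the program is more likely to fail than to succeed, whereas 99.999999% supports tens of millions.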

Low depth Quantum Amplitude Estimation with serial execution of operators on the same qubit register

The natural question is: are there methods to run quantum programs, such as the one for the risk model described above, on error-prone, near-term quantum computers? In our recent work, published as a preprint on arXiv (Error Resilient Quantum Amplitude Estimation from Parallel Quantum Phase Estimation), we present a new method to do just this. Our approach parallelizes the quantum algorithm, and this, it turns out, reduces the destructive effect of errors.

Low depth parallel and error resilient Quantum Amplitude Estimation

Depending on the requirements, the current quality of quantum computing hardware may even be sufficient for practical applications. However, the improvement comes at a cost: the number of qubits will have to increase by a factor of 100 at the very least. The good news is that the roadmaps of hardware providers such as IBM, IonQ, and Rigetti indicate that the number of qubits in their quantum computers will grow quickly over the next few years. As an indication, 1,000 (noisy) qubits are expected to be reached next year already! Here are some roadmaps:

https://ionq.com/posts/december-09-2020-scaling-quantum-computer-roadmap

https://www.rigetti.com/uploads/Rigetti-Investor-Presentation.pdf

So, to summarize: there are still some hurdles on the way to productive use of quantum computers. However, the latest developments give hope that quantum computing will be adopted for business use within a few years' time, even if the error rates do not drop by many orders of magnitude until then.